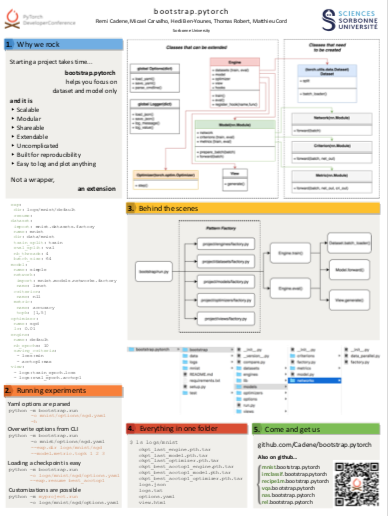

Bootstrap is a high-level framework for starting deep learning projects.

It aims to accelerate research projects and prototyping by providing a workflow that lets you focus on your dataset and model only.

And it is:

- Scalable

- Modular

- Shareable

- Extendable

- Uncomplicated

- Built for reproducibility

- Easy to log and plot anything

It is not a wrapper over PyTorch; it is a powerful extension.

Quick tour

To run an experiment (training + evaluation):

python -m bootstrap.run -o myproject/options/sgd.yaml

To display parsed options from the yaml file:

python -m bootstrap.run -o myproject/options/sgd.yaml -h

Running an experiment creates 4 files. Here is an example with MNIST:

- options.yaml contains the options used for the experiment.

- logs.txt contains all the information given to the logger.

- logs.json contains the following data: train_epoch.loss, train_batch.loss, eval_epoch.accuracy_top1, etc.

- view.html contains training and evaluation curves with javascript utilities (plotly).
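As a rough illustration of how logs.json could be inspected outside of view.html, the sketch below loads the file and picks the best evaluation accuracy. It assumes that each key (such as train_epoch.loss) maps to a list of values, one per logged step; that layout is an assumption, not something stated above, so check a real logs.json before relying on it. The helper `best_value` and the sample numbers are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def best_value(logs, key, mode="max"):
    """Return (index, value) of the best entry logged under `key`."""
    values = logs[key]
    pick = max if mode == "max" else min
    index = pick(range(len(values)), key=values.__getitem__)
    return index, values[index]


# Hypothetical stand-in for a real logs.json (made-up values, assumed layout:
# one list of values per logged key).
sample = {
    "train_epoch.loss": [0.9, 0.4, 0.3],
    "eval_epoch.accuracy_top1": [91.0, 97.5, 96.8],
}

with tempfile.TemporaryDirectory() as tmp:
    # Write the sample to disk so the example reads it back like a real file.
    path = Path(tmp) / "logs.json"
    path.write_text(json.dumps(sample))
    logs = json.loads(path.read_text())

best_epoch, best_acc = best_value(logs, "eval_epoch.accuracy_top1", mode="max")
last_loss = logs["train_epoch.loss"][-1]
print(f"best top-1 accuracy {best_acc} at index {best_epoch}; final train loss {last_loss}")
```

For a real experiment, replace the temporary file with the path to the logs.json in your experiment directory.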

To save the next experiment in a specific directory:

python -m bootstrap.run -o myproject/options/sgd.yaml --exp.dir logs/custom
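A flag such as --exp.dir reads like a dotted path into the nested options of the YAML file. The sketch below shows one way such an override could be applied to a nested dictionary. It is an illustration of the idea only, not bootstrap's actual implementation; the function `apply_override` and the default value `logs/default` are hypothetical.

```python
import copy


def apply_override(options, dotted_key, value):
    """Return a copy of `options` with the nested entry at `dotted_key` set to `value`."""
    result = copy.deepcopy(options)
    node = result
    *parents, leaf = dotted_key.split(".")
    for name in parents:
        # Walk down one level per path component, creating it if missing.
        node = node.setdefault(name, {})
    node[leaf] = value
    return result


# Hypothetical options, as they might look once a YAML file is loaded.
options = {"exp": {"dir": "logs/default", "resume": None}}

# Equivalent in spirit to passing `--exp.dir logs/custom` on the command line.
updated = apply_override(options, "exp.dir", "logs/custom")
print(updated["exp"]["dir"])
```

Returning a copy rather than mutating in place keeps the options loaded from the file available for comparison.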

To reload an experiment:

python -m bootstrap.run -o logs/custom/options.yaml --exp.resume last

Documentation

The package reference is available on the documentation website.

It also contains some notes.

Official project modules

- mnist.bootstrap.pytorch is a useful example for quickly starting a project with bootstrap.

- vision.bootstrap.pytorch contains utilities to train image classifiers, object detectors, etc. on common datasets such as ImageNet, CIFAR-10, CIFAR-100, COCO, Visual Genome, etc.

- recipe1m.bootstrap.pytorch is a project for image-text retrieval on the Recipe1M dataset, developed in the context of a SIGIR18 paper.

- block.bootstrap.pytorch is a project focused on fusion modules for the VQA 2.0, TDIUC and VRD datasets, developed in the context of an AAAI19 paper.