SpiderKeeper-2-1

This is a fork of SpiderKeeper. Changes in this fork:

0.2.5 (2018-09-17)

- Add APScheduler executor and process options (--executor, --process)

A scalable admin UI for spider services.

Features

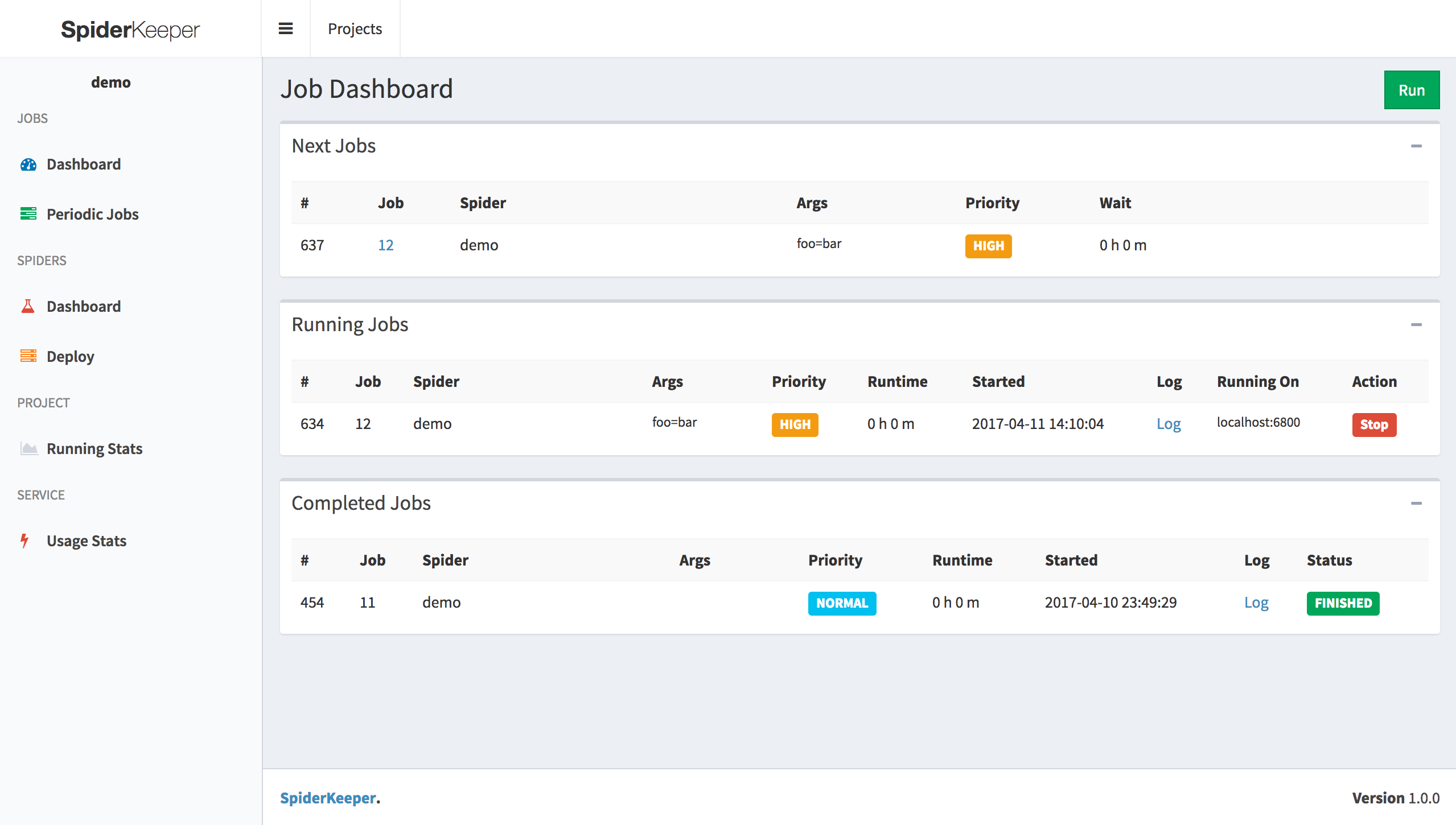

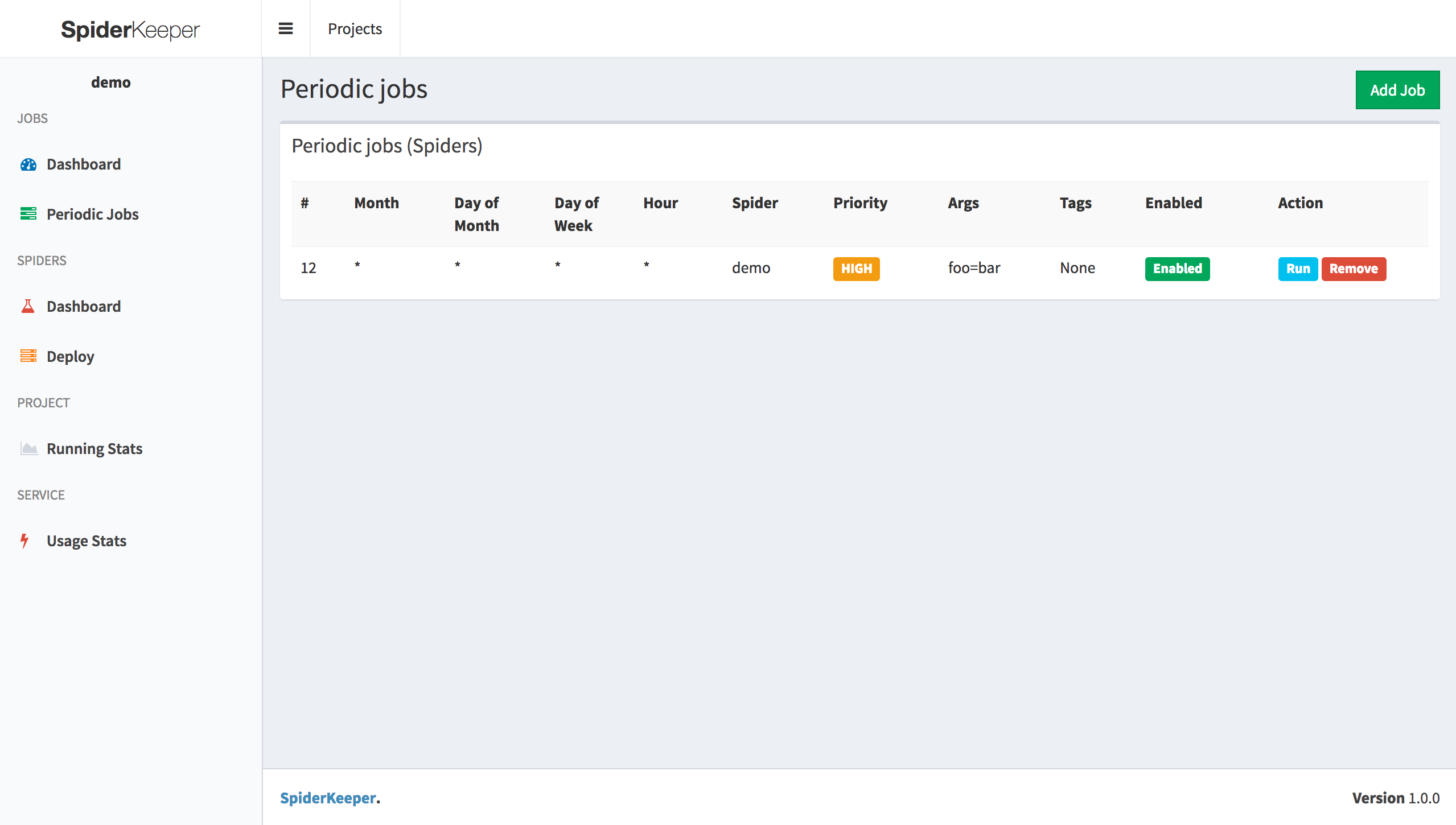

- Manage your spiders from a dashboard. Schedule them to run automatically

- With a single click deploy the scrapy project

- Show spider running stats

- Provide api

Current Support spider service

Screenshot

Getting Started

Installing

pip install spiderkeeper-2-1

Deployment

spiderkeeper [options]

Options:

-h, --help show this help message and exit

--host=HOST host, default: 0.0.0.0

--port=PORT port, default: 5000

--username=USERNAME basic auth username, default: admin

--password=PASSWORD basic auth password, default: admin

--type=SERVER_TYPE spider server type to access, default: scrapyd

--server=SERVERS servers, default: ['http://localhost:6800']

--database-url=DATABASE_URL SpiderKeeper metadata database, default: sqlite:////home/souche/SpiderKeeper.db

--no-auth disable basic auth

-v, --verbose log level

--executor ThreadPoolExecutor size, default: 30

--process process pool size, default: 3

Example:

spiderkeeper --server=http://localhost:6800
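The options listed above can also be assembled programmatically, for example when SpiderKeeper is launched from a supervisor script. The following is a minimal sketch and not part of SpiderKeeper itself: `sqlite_url` and `build_spiderkeeper_command` are hypothetical helpers, and passing `--server` once per scrapyd instance is an assumption based on the list-valued default shown in the options.

```python
import os


def sqlite_url(db_path):
    """Return an SQLAlchemy-style SQLite URL for a file path (hypothetical helper).

    An absolute POSIX path produces four slashes, the same form as the
    documented default sqlite:////home/souche/SpiderKeeper.db.
    """
    return "sqlite:///" + os.path.abspath(db_path)


def build_spiderkeeper_command(servers, host="0.0.0.0", port=5000,
                               username="admin", password="admin",
                               database_url=None, no_auth=False):
    """Build an argument list using only the flags documented in this README."""
    cmd = ["spiderkeeper", "--host=%s" % host, "--port=%d" % port]
    if no_auth:
        cmd.append("--no-auth")
    else:
        cmd += ["--username=%s" % username, "--password=%s" % password]
    # Assumption: one --server flag is given per scrapyd instance.
    cmd += ["--server=%s" % server for server in servers]
    if database_url:
        cmd.append("--database-url=%s" % database_url)
    return cmd


if __name__ == "__main__":
    # Print the command instead of running it, so the sketch has no side effects.
    print(" ".join(build_spiderkeeper_command(["http://localhost:6800"])))
```

The returned list can be passed to `subprocess.Popen` once SpiderKeeper is installed. Changing the default username and password is advisable whenever `--host` is left at 0.0.0.0.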

Usage

Visit:

- Web UI: http://localhost:5000

1. Create a project

2. Use [scrapyd-client](https://github.com/scrapy/scrapyd-client) to generate an egg file

scrapyd-deploy --build-egg output.egg

3. Upload the egg file (make sure the scrapyd server is running)

4. Done. Enjoy!
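Before uploading, it can help to confirm that the generated file is a usable egg. The sketch below is a hypothetical helper, not part of SpiderKeeper or scrapyd-client. It assumes the egg was built by setuptools, which packages it as a zip archive with metadata under `EGG-INFO/`.

```python
import zipfile


def looks_like_egg(path):
    """Return True if path is a zip archive containing an EGG-INFO/ entry."""
    if not zipfile.is_zipfile(path):
        return False
    with zipfile.ZipFile(path) as archive:
        # Assumption: setuptools-built eggs keep their metadata in EGG-INFO/.
        return any(name.startswith("EGG-INFO/") for name in archive.namelist())
```

For example, `looks_like_egg("output.egg")` can be called on the file produced by the scrapyd-deploy command above before it is uploaded through the web UI.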

- API (Swagger): http://localhost:5000/api.html
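Unless SpiderKeeper is started with --no-auth, requests to these URLs need HTTP basic auth credentials. The following is a minimal sketch of preparing such a request with the Python standard library. `build_api_request` is a hypothetical helper, and the defaults mirror the documented admin/admin credentials and port 5000.

```python
import base64
import urllib.request


def build_api_request(base_url="http://localhost:5000",
                      username="admin", password="admin"):
    """Prepare a GET request for the Swagger page with a basic auth header."""
    credentials = ("%s:%s" % (username, password)).encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    request = urllib.request.Request(base_url.rstrip("/") + "/api.html")
    request.add_header("Authorization", "Basic " + token)
    return request


if __name__ == "__main__":
    req = build_api_request()
    # Only print the target URL; sending requires a running SpiderKeeper server.
    print(req.full_url)
```

With the server running, `urllib.request.urlopen(build_api_request())` should return the Swagger page.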

Authors

License

This project is licensed under the MIT License.

Contributing

Contributions are welcome!