Kaskade is a text user interface (TUI) for Apache Kafka, built with Textual by Textualize.

It includes features like:

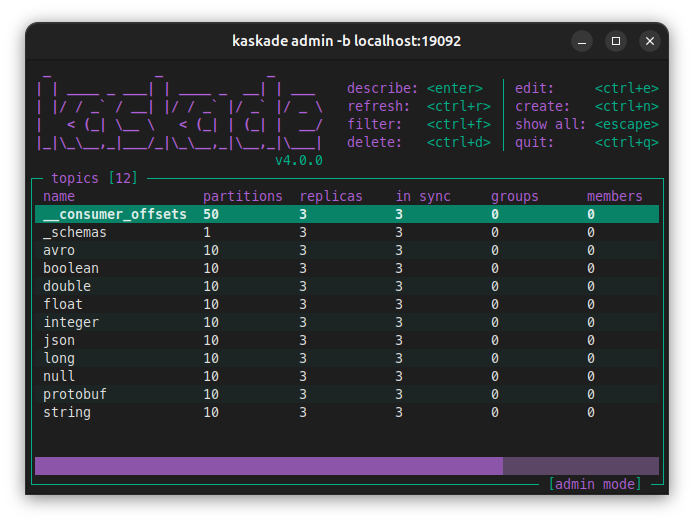

- List topics, partitions, groups and group members.

- Topic information like lag, replicas and record count.

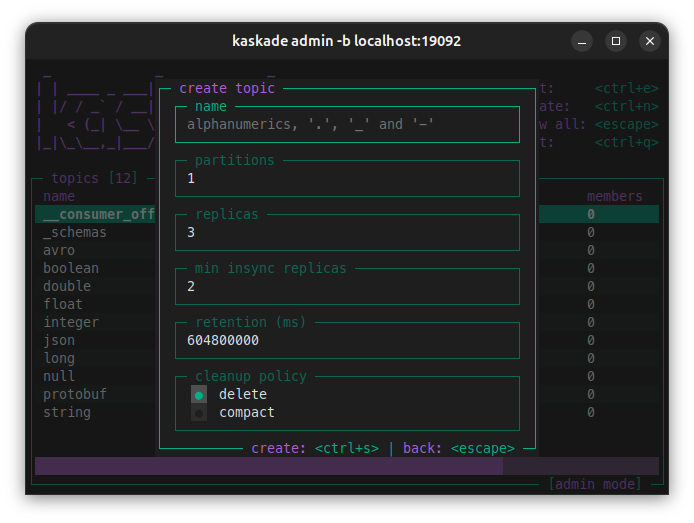

- Create, edit and delete topics.

- Filter topics by name.

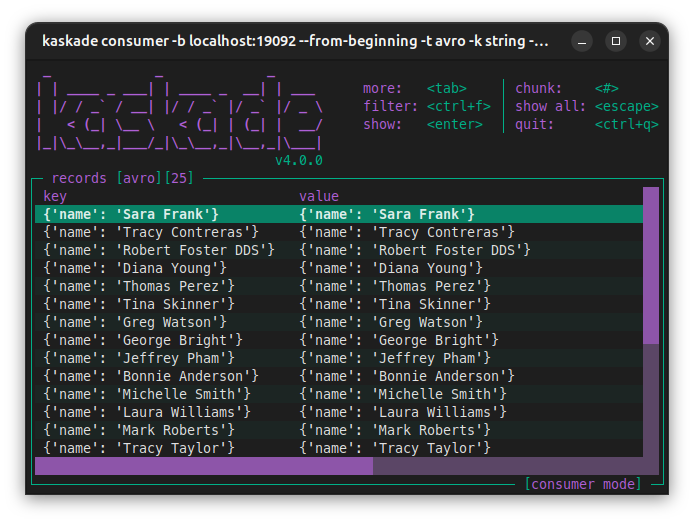

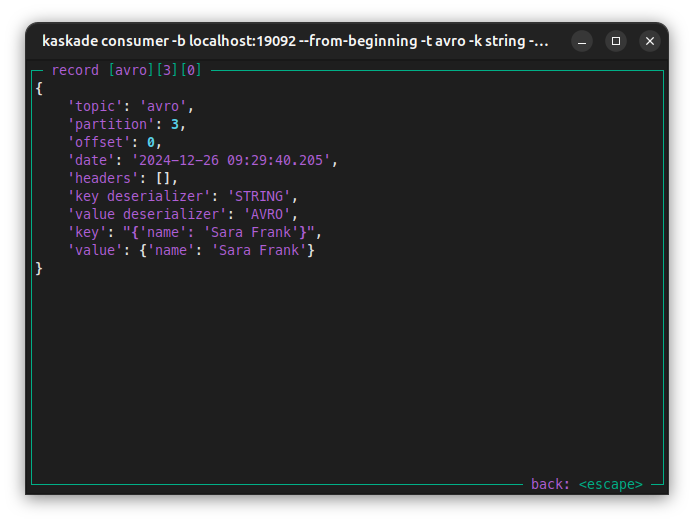

- JSON, string, integer, long, float, boolean and double deserialization.

- Filter by key, value, header and/or partition.

- Schema Registry support for Avro and JSON.

- Protobuf deserialization support without Schema Registry.

- Avro deserialization without Schema Registry.

Kaskade does not include:

- Schema Registry support for Protobuf.

- Runtime auto-refresh.

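Install with `brew`:

```shell
brew install kaskade
```

Install with `pipx`:

```shell
pipx install kaskade
```

Admin view:

```shell
kaskade admin -b my-kafka:9092
```

Consumer view:

```shell
kaskade consumer -b my-kafka:9092 -t my-topic
```

Multiple bootstrap servers:

```shell
kaskade admin -b my-kafka:9092,my-kafka:9093
```

Consume JSON keys and values:

```shell
kaskade consumer -b my-kafka:9092 -t my-json-topic -k json -v json
```

Supported deserializers:

```
[bytes, boolean, string, long, integer, double, float, json, avro, protobuf, registry]
```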

Consume from the beginning:

```shell
kaskade consumer -b my-kafka:9092 -t my-topic --from-beginning
```

Schema Registry:

```shell
kaskade consumer -b my-kafka:9092 -t my-avro-topic \
    -k registry -v registry \
    --registry url=http://my-schema-registry:8081
```

For more information about Schema Registry configurations, see: Confluent Schema Registry client.
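Before pointing kaskade at a registry, it can help to confirm that the URL answers at all. The sketch below is one way to do that with `curl` against the Schema Registry REST endpoint `GET /subjects`. The helper name `list_subjects` is hypothetical and not part of kaskade, and the URL in the usage comment is the placeholder from the example above.

```shell
# list_subjects is a hypothetical helper, not part of kaskade.
# It asks the Schema Registry REST API for its registered subjects
# (GET /subjects) and fails if the registry returns an HTTP error.
list_subjects() {
    registry_url="${1%/}"   # drop one trailing slash, if present
    # -f: fail on HTTP errors, -s: no progress meter, -S: still show errors
    curl -fsS "${registry_url}/subjects"
}

# Usage (requires a reachable registry):
#   list_subjects http://my-schema-registry:8081
```

If the call fails, the same URL is unlikely to work with `--registry url=...`.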

Apicurio registry:

```shell
kaskade consumer -b my-kafka:9092 -t my-avro-topic \
    -k registry -v registry \
    --registry url=http://my-apicurio-registry:8081/apis/ccompat/v7
```

For more about Apicurio, see: Apicurio registry.

SSL encryption:

```shell
kaskade admin -b my-kafka:9092 -c security.protocol=SSL
```

For more information about SSL encryption and SSL authentication, see: Configure librdkafka client.
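When the broker also requires client certificates, the same `-c` option can carry the relevant librdkafka properties (`ssl.ca.location`, `ssl.certificate.location`, `ssl.key.location`). The following is a minimal sketch that only prints the resulting command so it can be reviewed before running. The helper `ssl_admin_command` and the certificate paths are hypothetical, and the sketch assumes kaskade forwards every `-c` pair to librdkafka as it does for `security.protocol`.

```shell
# Hypothetical certificate paths; replace them with your own files.
CA_CERT="${CA_CERT:-/path/to/ca.pem}"
CLIENT_CERT="${CLIENT_CERT:-/path/to/client.pem}"
CLIENT_KEY="${CLIENT_KEY:-/path/to/client.key}"

# ssl_admin_command is a hypothetical helper, not part of kaskade.
# It prints (does not run) a kaskade admin command for the given
# bootstrap server with the SSL-related librdkafka properties set.
ssl_admin_command() {
    printf 'kaskade admin -b %s' "$1"
    # printf reuses the format once per remaining argument
    printf ' -c %s' \
        "security.protocol=SSL" \
        "ssl.ca.location=${CA_CERT}" \
        "ssl.certificate.location=${CLIENT_CERT}" \
        "ssl.key.location=${CLIENT_KEY}"
    printf '\n'
}

ssl_admin_command my-kafka:9092
```

Paths containing spaces would need quoting before the printed command is pasted into a shell.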

Confluent Cloud:

```shell
kaskade admin -b ${BOOTSTRAP_SERVERS} \
    -c security.protocol=SASL_SSL \
    -c sasl.mechanism=PLAIN \
    -c sasl.username=${CLUSTER_API_KEY} \
    -c sasl.password=${CLUSTER_API_SECRET}
```

```shell
kaskade consumer -b ${BOOTSTRAP_SERVERS} -t my-avro-topic \
    -k string -v registry \
    -c security.protocol=SASL_SSL \
    -c sasl.mechanism=PLAIN \
    -c sasl.username=${CLUSTER_API_KEY} \
    -c sasl.password=${CLUSTER_API_SECRET} \
    --registry url=${SCHEMA_REGISTRY_URL} \
    --registry basic.auth.user.info=${SR_API_KEY}:${SR_API_SECRET}
```

More about Confluent Cloud configuration at: Kafka client quick start for Confluent Cloud.
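The Confluent Cloud commands above depend on several environment variables, and an unset one silently expands to an empty string. A small guard can catch that before kaskade starts. This is only a sketch: `require_env` is a hypothetical helper, not part of kaskade, and it is written for a POSIX shell.

```shell
# require_env is a hypothetical helper, not part of kaskade.
# It returns non-zero if any of the named environment variables
# is unset or empty, and reports each missing name on stderr.
require_env() {
    missing=0
    for name in "$@"; do
        # Indirect lookup: read the variable whose name is stored in $name.
        eval "value=\${${name}:-}"
        if [ -z "${value}" ]; then
            echo "missing environment variable: ${name}" >&2
            missing=1
        fi
    done
    return "${missing}"
}

# Usage:
#   require_env BOOTSTRAP_SERVERS CLUSTER_API_KEY CLUSTER_API_SECRET \
#       && kaskade admin -b "${BOOTSTRAP_SERVERS}" ...
```

For the consumer example, `SCHEMA_REGISTRY_URL`, `SR_API_KEY` and `SR_API_SECRET` can be added to the same check.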

Running with Docker:

```shell
docker run --rm -it --network my-network sauljabin/kaskade:latest \
    admin -b my-kafka:9092
```

```shell
docker run --rm -it --network my-network sauljabin/kaskade:latest \
    consumer -b my-kafka:9092 -t my-topic
```

Consume using `my-schema.avsc` file:

```shell
kaskade consumer -b my-kafka:9092 --from-beginning \
    -k string -v avro \
    -t my-avro-topic \
    --avro value=my-schema.avsc
```

Install `protoc` command:

```shell
brew install protobuf
```

Generate a Descriptor Set file from your `.proto` file:

```shell
protoc --include_imports \
    --descriptor_set_out=my-descriptor.desc \
    --proto_path=${PROTO_PATH} \
    ${PROTO_PATH}/my-proto.proto
```

Consume using `my-descriptor.desc` file:

```shell
kaskade consumer -b my-kafka:9092 --from-beginning \
    -k string -v protobuf \
    -t my-protobuf-topic \
    --protobuf descriptor=my-descriptor.desc \
    --protobuf value=mypackage.MyMessage
```

More about protobuf and `FileDescriptorSet` at: Protocol Buffers documentation.
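For trying the Protobuf steps locally, a `.proto` file matching the names used above could look like the sketch below. Only the file name `my-proto.proto`, the package `mypackage` and the message `MyMessage` come from the commands above. The fields are invented for illustration, and `PROTO_PATH` falls back to the current directory if it is not set.

```shell
# Write a minimal, hypothetical my-proto.proto whose package and message
# names match the value passed as --protobuf value=mypackage.MyMessage.
PROTO_PATH="${PROTO_PATH:-.}"
mkdir -p "${PROTO_PATH}"

cat > "${PROTO_PATH}/my-proto.proto" <<'EOF'
syntax = "proto3";

package mypackage;

// Field names and numbers are illustrative only.
message MyMessage {
  string name = 1;
  int64 count = 2;
}
EOF
```

Running the `protoc` command above against this file should then produce `my-descriptor.desc`.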

For Q&A go to GitHub Discussions.

For development instructions see DEVELOPMENT.md.